Recon has always been essential to my bug bounty work. However, we all practice it differently and with different end goals: sometimes just a list of subdomains, sometimes much heavier results such as ports, services, URLs, vulnerabilities, etc.

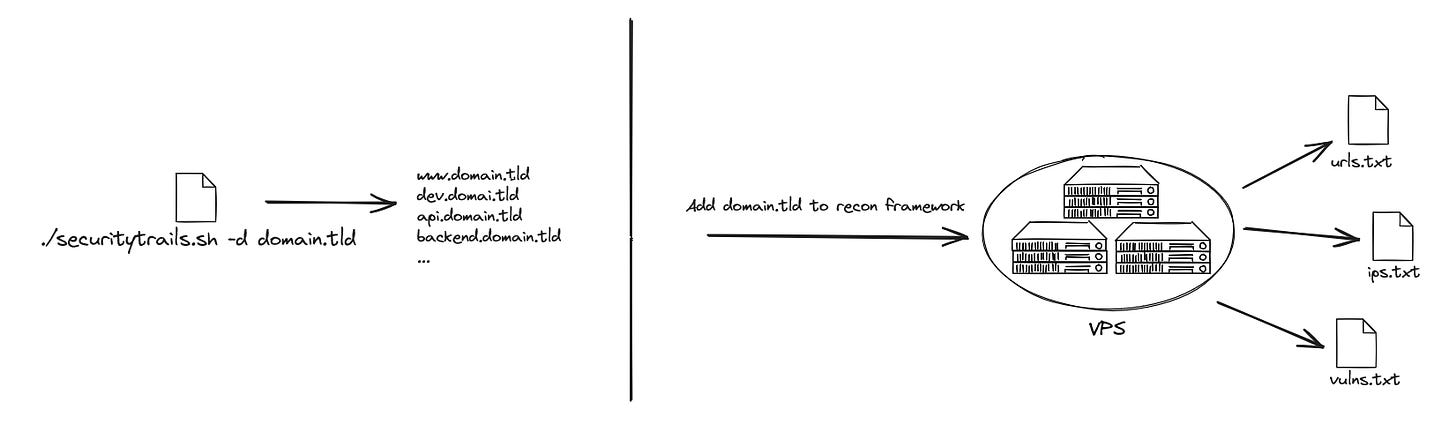

The options range from a light recon, for example a single curl request to the SecurityTrails API, to a more complete recon combining several tools, and, for the most adventurous, a complete framework.
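To give an idea of how small a light recon can be, here is a minimal Python equivalent of that single API call, using only the standard library. It assumes the SecurityTrails v1 `/domain/{domain}/subdomains` endpoint, authentication through an `APIKEY` header, and a response listing subdomain labels under a `subdomains` key; check the current SecurityTrails API reference before relying on any of these. The function names are illustrative.

```python
import json
import urllib.request

# Assumption: SecurityTrails v1 subdomains endpoint, authenticated with an
# "APIKEY" header; check the current API reference before relying on it.
API_URL = "https://api.securitytrails.com/v1/domain/{domain}/subdomains"


def build_request(domain: str, api_key: str) -> urllib.request.Request:
    """Build the GET request for the subdomains endpoint."""
    return urllib.request.Request(
        API_URL.format(domain=domain),
        headers={"APIKEY": api_key, "Accept": "application/json"},
    )


def parse_subdomains(domain: str, body: str) -> list[str]:
    """Turn the JSON body into sorted, deduplicated FQDNs.

    Assumes labels relative to the apex under a "subdomains" key,
    e.g. {"subdomains": ["www", "api"]}.
    """
    labels = json.loads(body).get("subdomains", [])
    return sorted({f"{label}.{domain}" for label in labels})


def fetch_subdomains(domain: str, api_key: str) -> list[str]:
    """Perform the call (needs network access and a valid API key)."""
    with urllib.request.urlopen(build_request(domain, api_key), timeout=30) as resp:
        return parse_subdomains(domain, resp.read().decode("utf-8"))
```

Keeping the parsing separate from the network call makes it easy to test the output format without spending API quota.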

In my opinion, there are no bad choices: you just have to pick an approach adapted to your needs and what works for you. One thing is certain: the more complete the solution, the more complex it tends to be, particularly to maintain.

When combining several tools, you need to make sure that the end of the chain always behaves the same way after every update, for example:

- when a tool (Amass, Subfinder, …) is updated;
- when a third-party service (SecurityTrails API, VirusTotal API, ...) is updated.
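One way to keep the end of the chain stable is to push every tool's raw output through the same normalization step, and to flag a tool whose result count suddenly collapses, which is often the first sign that an update broke its parsing or its API. A minimal sketch, assuming each tool's output has already been read as a list of lines; `normalize`, `merge_tool_outputs`, `suspicious_tools` and the 50% threshold are hypothetical choices, not part of any of these tools.

```python
def normalize(raw_lines: list[str], scope: str) -> list[str]:
    """Lowercase, strip wildcards and trailing dots, keep only in-scope hosts."""
    results = set()
    for line in raw_lines:
        host = line.strip().lower().rstrip(".")
        if host.startswith("*."):
            host = host[2:]
        if not host or " " in host:
            continue
        # Exact apex or a true subdomain: "notexample.com" must not match.
        if host == scope or host.endswith("." + scope):
            results.add(host)
    return sorted(results)


def merge_tool_outputs(outputs: dict[str, list[str]], scope: str) -> list[str]:
    """Merge every tool's normalized output into one deduplicated list."""
    merged: set[str] = set()
    for lines in outputs.values():
        merged.update(normalize(lines, scope))
    return sorted(merged)


def suspicious_tools(
    previous: dict[str, int], current: dict[str, int], min_ratio: float = 0.5
) -> list[str]:
    """Flag tools whose result count dropped below `min_ratio` of the last run."""
    flagged = []
    for tool, before in previous.items():
        after = current.get(tool, 0)
        if before > 0 and after < before * min_ratio:
            flagged.append(tool)
    return sorted(flagged)
```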

The more tools we add, the better we expect our results to be. In reality, we also end up spending a considerable amount of time keeping everything working, and it gets even more complicated when you want to add a web UI to your project.

A significant advantage, however, is that you generally know the tools you use well, or even very well, which allows you to contribute to them!

For those who don't want to deal with all that but still want a complete solution, several tools have emerged, such as ReconFTW, Rengine or Osmedeus. Alternatively, mainly thanks to ProjectDiscovery, we can chain several tools directly together, which can even spare us from writing a script.

After several attempts and after developing several recon tools and solutions, it became obvious that, in my case and for my workflow, what I mainly want at the end of the chain is the subdomains with their associated web services.

The output must be a single document that contains all the information I need in a standardized structure.

Note: for those interested, the JSON I get as output is inspired by, and derived from, ReconJSON.
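To illustrate what "subdomains with their associated web services in a standardized structure" can look like, here is a minimal sketch using dataclasses serialized to JSON. The field names (`target`, `subdomains`, `web_services`, `technologies`, etc.) are hypothetical and are not the actual ReconJSON schema; the point is only that every recon produces the same nested shape.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class WebService:
    url: str
    status_code: int
    title: str = ""
    technologies: list[str] = field(default_factory=list)


@dataclass
class Subdomain:
    name: str
    ips: list[str] = field(default_factory=list)
    web_services: list[WebService] = field(default_factory=list)


@dataclass
class ReconResult:
    target: str
    subdomains: list[Subdomain] = field(default_factory=list)

    def to_json(self) -> str:
        # asdict() recurses into nested dataclasses and lists.
        return json.dumps(asdict(self), indent=2, sort_keys=True)
```

Because every stage fills the same structure, downstream consumers (a UI, a diffing script, a notifier) only have to understand one format.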

When you run your recon solution on an ad hoc basis, the script generally runs on your own machine or on a VPS.

When you develop your own solution and want to be a little more "aggressive", the question of architecture becomes more complicated. If your framework runs on your PC or on a single VPS, launching several recons at the same time is difficult, or even impossible.

Even with a large server, launching several recons on the same machine is not a good idea, as I have learned from experience, especially with DNS bruteforce or if your recon includes actions that could get you banned by a WAF/CDN.

If your IP gets banned by a WAF/CDN during one recon, you may miss results for the other recons that go through that same WAF/CDN.

So we enter a new spiral of endless complexity, to which I have not yet found the best answer myself. I will present 3 solutions here; there are others that I have not tested, as well as variations of these three.

Solution n°1: VPS Fleet

Develop a central component (an API, for example) that launches the recons and retrieves their results.

When a domain is added, a VPS is spawned on demand to run its recon.
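The core of that central component can be sketched as: create an instance for the domain, collect its results, and always destroy the instance, even when the recon fails. The `CloudProvider` interface below is hypothetical (in practice it would wrap Axiom or your cloud provider's SDK), and `InMemoryProvider` is a fake used only to exercise the flow locally.

```python
from typing import Protocol


class CloudProvider(Protocol):
    """Hypothetical interface, backed in practice by Axiom or a cloud SDK."""

    def create_instance(self, domain: str) -> str: ...

    def collect_results(self, instance_id: str) -> dict: ...

    def destroy_instance(self, instance_id: str) -> None: ...


def run_recon(provider: CloudProvider, domain: str) -> dict:
    """Spawn one VPS for `domain`, fetch its results, always tear it down."""
    instance_id = provider.create_instance(domain)
    try:
        return provider.collect_results(instance_id)
    finally:
        # Runs even if the recon fails, so no idle instance keeps billing.
        provider.destroy_instance(instance_id)


class InMemoryProvider:
    """Fake provider for local tests; no real cloud calls are made."""

    def __init__(self, fail_on: frozenset[str] = frozenset()):
        self.fail_on = fail_on
        self.alive: dict[str, str] = {}  # instance id -> domain
        self._counter = 0

    def create_instance(self, domain: str) -> str:
        self._counter += 1
        instance_id = f"vps-{self._counter}"
        self.alive[instance_id] = domain
        return instance_id

    def collect_results(self, instance_id: str) -> dict:
        domain = self.alive[instance_id]
        if domain in self.fail_on:
            raise RuntimeError(f"recon failed for {domain}")
        return {"domain": domain, "instance": instance_id}

    def destroy_instance(self, instance_id: str) -> None:
        self.alive.pop(instance_id, None)
```

Putting the teardown in `finally` is the cheapest defense against instances that outlive their recon.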

Among its advantages, it is not an expensive solution, and it lets you be quite flexible: depending on the need, you can create a more or less powerful VPS for each recon.

You can use tools like Axiom to control your fleet, or work directly with your cloud provider's SDK.

It is a fairly simple solution to set up. A layer of complexity is added when you want to scan a large number of domains: by default, some cloud providers such as AWS do not let you run more than 20 EC2 instances simultaneously. You then need a queue mechanism so that 20 recons run while the others wait.
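A simple way to get that queue, sketched here under the assumption that each recon is a blocking Python call (for instance a function that spawns a VPS and waits for its results), is a thread pool whose size equals the instance quota: extra submissions wait in the executor's internal queue until a slot frees up. `run_all` and `MAX_INSTANCES` are illustrative names.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

MAX_INSTANCES = 20  # quota discussed above; adjust to your provider/account


def run_all(
    domains: list[str],
    recon: Callable[[str], dict],
    max_instances: int = MAX_INSTANCES,
) -> dict[str, dict]:
    """Run one recon per domain with at most `max_instances` in flight.

    ThreadPoolExecutor keeps the extra submissions queued until a worker,
    i.e. an instance slot, becomes free.
    """
    with ThreadPoolExecutor(max_workers=max_instances) as pool:
        futures = {domain: pool.submit(recon, domain) for domain in domains}
        return {domain: fut.result() for domain, fut in futures.items()}
```

For a long-running service you would more likely put a persistent queue (a database table or a message broker) in front of this, so that pending domains survive a restart.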

As for the disadvantages, having used this solution for a long time, I can say that you must constantly keep an eye on your servers: for various reasons, an instance may not stop correctly, and you are then billed for a server that is doing nothing. Wasting time and money is exactly what you want to avoid.
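A small safety net is a periodic "reaper" that lists your instances and terminates any that has been running longer than a recon should ever take. The sketch below only contains the decision logic; it assumes you can obtain each instance's launch time from your provider (EC2, for example, reports a `LaunchTime` per instance). `stale_instances` and the lifetime threshold are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def stale_instances(
    launch_times: dict[str, datetime],
    max_lifetime: timedelta,
    now: Optional[datetime] = None,
) -> list[str]:
    """Return ids of instances running longer than any recon should take.

    Launch times must be timezone-aware (UTC) to be comparable with `now`.
    """
    now = now or datetime.now(timezone.utc)
    return sorted(
        instance_id
        for instance_id, launched in launch_times.items()
        if now - launched > max_lifetime
    )
```

Run from a cron job, the returned ids are then passed to your provider's terminate call.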

Solution n°2: Serverless

I started thinking about this solution a while ago, and it never really convinced me, although I understand that it has its audience.

This is a fairly complex topic to explain, and it can be approached in several ways. The idea is to split your recon into a whole bunch of small bricks, each as light and fast as possible.
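The key property of such bricks is that each one is stateless and only exchanges serialized data, so it can run as its own function invocation. The sketch below runs two hypothetical bricks locally, forcing a JSON round-trip between them to mimic what a queue or an invocation payload would carry; the brick names and event shapes are illustrative only.

```python
import json
from typing import Callable

Stage = Callable[[dict], dict]


def normalize_targets(event: dict) -> dict:
    """Brick 1 (hypothetical): clean and deduplicate the input domains."""
    return {"domains": sorted({d.strip().lower() for d in event["domains"] if d.strip()})}


def fan_out(event: dict) -> dict:
    """Brick 2 (hypothetical): one small enumeration task per domain."""
    return {"tasks": [{"domain": d, "step": "enumerate"} for d in event["domains"]]}


def run_locally(stages: list[Stage], event: dict) -> dict:
    """Chain the bricks, passing only serialized JSON between them,
    as a queue or an invocation payload would in the cloud."""
    payload = json.dumps(event)
    for stage in stages:
        payload = json.dumps(stage(json.loads(payload)))
    return json.loads(payload)
```

In the cloud, each task produced by `fan_out` would typically become a separate message, and therefore a separate, short invocation.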

Among the advantages, you can get an extremely fast recon and, if you don't abuse it, a completely free one! Several cloud providers offer a free tier based on your resource consumption, so by rotating between providers (AWS, GCP, Azure, etc.) you end up with reduced costs.
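Several function-as-a-service free tiers are expressed as a number of requests plus an amount of compute in GB-seconds (memory in GB multiplied by execution time). A quick check of whether a month of recon fits, as a sketch: the allowance values are parameters you must take from your provider's current pricing page (for example, AWS Lambda has published 1 million requests and 400,000 GB-seconds per month).

```python
def gb_seconds(invocations: int, avg_duration_s: float, memory_mb: int) -> float:
    """Compute consumed, in GB-seconds (memory in GB x execution seconds)."""
    return invocations * avg_duration_s * (memory_mb / 1024)


def fits_free_tier(
    invocations: int,
    avg_duration_s: float,
    memory_mb: int,
    allowance_gb_s: float,
    max_requests: int,
) -> bool:
    """True if a month of usage stays within both free-tier limits."""
    return (
        invocations <= max_requests
        and gb_seconds(invocations, avg_duration_s, memory_mb) <= allowance_gb_s
    )
```

For instance, 5,000 invocations of 60 seconds at 512 MB consume 5000 × 60 × 0.5 = 150,000 GB-seconds.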

Note: however, this rotation is also a technical challenge. For example, a serverless container built for Scaleway will not work on AWS as-is, because AWS requires a specific package.
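A common way to limit that friction is to keep the recon logic provider-agnostic and write one thin adapter per platform. In the sketch below, `recon_core` is a hypothetical placeholder; `lambda_handler(event, context)` follows the AWS Lambda Python handler signature; and the HTTP adapter targets container platforms that forward HTTP requests, assuming (to be verified for Scaleway) that the listening port is provided in a `PORT` environment variable.

```python
import json
import os
from http.server import BaseHTTPRequestHandler, HTTPServer


def recon_core(domains: list[str]) -> dict:
    """Provider-agnostic logic (placeholder): shared by every adapter."""
    unique = sorted(set(domains))
    return {"count": len(unique), "domains": unique}


def lambda_handler(event, context):
    """AWS Lambda adapter: Lambda invokes handler(event, context)."""
    return recon_core(event.get("domains", []))


class ReconHTTPHandler(BaseHTTPRequestHandler):
    """HTTP adapter for container-based serverless platforms."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        event = json.loads(self.rfile.read(length) or b"{}")
        body = json.dumps(recon_core(event.get("domains", []))).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve() -> None:
    # Assumption: the platform passes the listening port via $PORT.
    port = int(os.environ.get("PORT", "8080"))
    HTTPServer(("0.0.0.0", port), ReconHTTPHandler).serve_forever()
```

Only the adapters (and the packaging around them) change between providers; the recon logic stays identical.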

But a serverless function is only meant to run for at most 15 minutes, which is its main disadvantage. As I showed in one of my previous articles, some steps, such as DNS resolution, are simply not feasible for very large domains (and a serverless function was never intended for that).
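If you still want to push DNS resolution into functions, the wordlist has to be cut into chunks that each finish before the timeout. A back-of-the-envelope sketch: the resolution rate is an assumption you must measure for your own resolvers, and `margin_s` reserves time for startup and result upload. All names here are illustrative.

```python
import math

FUNCTION_TIMEOUT_S = 15 * 60  # maximum duration mentioned above


def max_names_per_invocation(
    resolutions_per_second: float,
    timeout_s: float = FUNCTION_TIMEOUT_S,
    margin_s: float = 120,
) -> int:
    """Names one invocation can resolve, keeping `margin_s` for startup/upload."""
    usable_s = timeout_s - margin_s
    if usable_s <= 0:
        raise ValueError("margin_s must be smaller than timeout_s")
    return max(1, math.floor(resolutions_per_second * usable_s))


def chunk(names: list[str], size: int) -> list[list[str]]:
    """Split the wordlist into consecutive slices of at most `size` names."""
    return [names[i:i + size] for i in range(0, len(names), size)]


def invocations_needed(total_names: int, per_invocation: int) -> int:
    """Number of invocations required to cover the whole wordlist."""
    return math.ceil(total_names / per_invocation)
```

At an assumed 200 resolutions per second, one invocation handles 200 × 780 = 156,000 names, so a million candidates already need 7 invocations, each of which then has to be orchestrated and merged.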

The second blocking point is the knowledge needed to implement and maintain this architecture: you quickly end up dealing with complex queue management and orchestration.

An excellent example is described in the article "Brevity In Motion - Automated Cloud Based Recon", which includes an architecture diagram of the solution.

With the necessary knowledge, you can end up with incredibly effective and inexpensive recon.

We can obviously do something much simpler, though more limited. For example, I published FastRecon, a tool designed to run as a serverless container, which gets me the httpx output for a recon of around 500 domains in just 2 minutes, for free.

Solution n°3: Task / Jobs

This is the ideal solution for me: it combines the advantages of serverless (no server management) with those of VPS instances (running long tasks), you can define a timeout, and it is simple to implement.
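Job platforms enforce the timeout for you; to reason about the behavior locally, here is a minimal sketch of the same contract: run a recon step as a child process, kill it once the timeout is exceeded, and keep whatever output it produced. `run_job` and `JobResult` are hypothetical names, not part of any job platform's API.

```python
import subprocess
from dataclasses import dataclass
from typing import Optional


@dataclass
class JobResult:
    returncode: Optional[int]  # None when the job was killed on timeout
    stdout: str
    timed_out: bool


def run_job(cmd: list[str], timeout_s: float) -> JobResult:
    """Run a recon step as a job that is killed once `timeout_s` is exceeded."""
    try:
        done = subprocess.run(cmd, capture_output=True, text=True, timeout=timeout_s)
        return JobResult(done.returncode, done.stdout, timed_out=False)
    except subprocess.TimeoutExpired as exc:
        # subprocess.run() kills the child before re-raising; keep partial output.
        partial = exc.stdout or ""
        if isinstance(partial, bytes):
            partial = partial.decode("utf-8", errors="replace")
        return JobResult(None, partial, timed_out=True)
```

Unlike a forgotten VPS, a job that hangs is bounded by its timeout, which caps the cost of a failure.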

Its only drawback is the cost! A 2-core / 2 GB RAM VPS costs me €0.0137 per hour, while the same resources as a job cost me €0.08784 per hour, more than 6 times the price of my VPS.
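The arithmetic behind "more than 6 times", using the two hourly prices above (the `cost` helper is just for illustration):

```python
VPS_EUR_PER_HOUR = 0.0137   # 2 cores / 2 GB RAM VPS (figure from this article)
JOB_EUR_PER_HOUR = 0.08784  # same resources run as a job (figure from this article)


def cost(hours: float, eur_per_hour: float) -> float:
    """Cost in euros for `hours` of compute, rounded to the cent."""
    return round(hours * eur_per_hour, 2)


ratio = JOB_EUR_PER_HOUR / VPS_EUR_PER_HOUR
print(f"A job costs {ratio:.2f}x the VPS price")
print(f"100 h: VPS €{cost(100, VPS_EUR_PER_HOUR)}, job €{cost(100, JOB_EUR_PER_HOUR)}")
```

At that ratio (about 6.4), a month that cost around €100 in VPS hours would come to roughly €640 if the same hours ran as jobs, assuming the whole bill was compute time.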

When you don't do much recon, this cost does not matter much, but having already had months at around €100 with solution n°1, the decision takes more thought!

To conclude, there is no good or bad choice; once again, I insist that you should favor a solution adapted to your needs. Maybe this article will help you, maybe it will confuse you even more 😁